Round-up of a data centric architecture

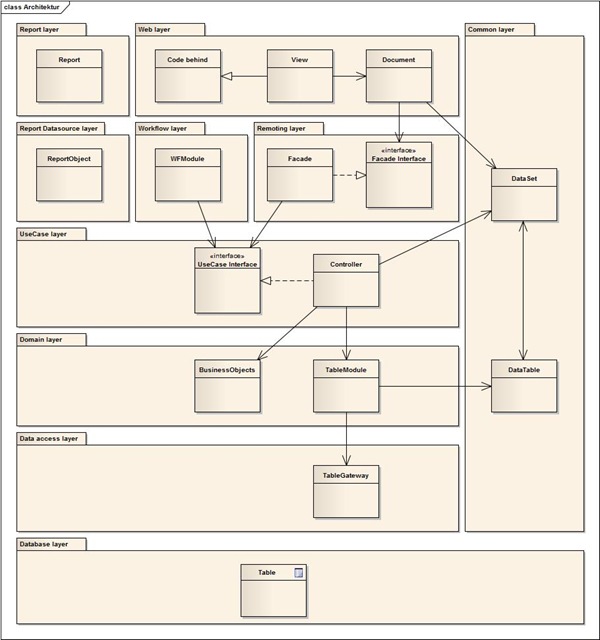

In my last big project we had to use a data centric architecture. It took us a while to learn which architecture was the most appropriate one. The result is shown in the diagram above.

Let me explain the diagram. The data (or state) is managed by the database layer and the common layer, which contains the .NET class DataSet and its DataTables (the logical representation of the physical tables in the database). This architecture uses the Table Module pattern for domain logic and the Table Data Gateway pattern for data access logic.

There is no need for DTOs: the data access layer loads the state into the DataSet, which is then passed up through the layers above it. This means that all layers (except the database layer) know the common layer. Instead of using the DataSet, you could also implement this with POJOs, but in a data centric approach that leads to an anemic domain model.
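The split between the two patterns can be sketched in a few lines. This is a minimal, language-neutral illustration in Python, not the project's code: a plain dict stands in for the DataSet, and the names `OrderGateway`, `OrderModule` and the `orders` table are hypothetical.

```python
import sqlite3

# Hypothetical stand-in for a DataSet: table name -> list of row dicts.
DataSet = dict


class OrderGateway:
    """Table Data Gateway: the only place that knows SQL for the orders table."""

    def __init__(self, connection: sqlite3.Connection) -> None:
        self._connection = connection

    def load_all(self, data_set: DataSet) -> None:
        # Load the state straight into the shared data structure; no DTOs.
        cursor = self._connection.execute(
            "SELECT id, amount, status FROM orders ORDER BY id"
        )
        data_set["orders"] = [
            {"id": row[0], "amount": row[1], "status": row[2]} for row in cursor
        ]


class OrderModule:
    """Table Module: one class holds the domain logic for all rows of a table."""

    def __init__(self, data_set: DataSet) -> None:
        self._rows = data_set["orders"]

    def total_open_amount(self) -> float:
        return sum(row["amount"] for row in self._rows if row["status"] == "open")


def demo() -> float:
    """Wire gateway and module together against an in-memory database."""
    connection = sqlite3.connect(":memory:")
    connection.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, status TEXT)"
    )
    connection.executemany(
        "INSERT INTO orders (id, amount, status) VALUES (?, ?, ?)",
        [(1, 10.0, "open"), (2, 5.5, "closed"), (3, 4.5, "open")],
    )
    data_set: DataSet = {}
    OrderGateway(connection).load_all(data_set)
    return OrderModule(data_set).total_open_amount()
```

Note that the rows themselves carry no behavior. All logic sits in the module, which is exactly why swapping the DataSet for plain objects would give you an anemic domain model.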

The domain layer contains the core business logic (domain logic). With a data centric architecture you cannot program in a pure OO way, because every method needs a pointer to the data. In this architecture there are two ways to solve that problem. The first is to pass one pointer to the data structure (here the DataSet) and another to the DataRow via a key (for example the primary key). The second is to fetch a DataRow once and pass it around. The problem with the second approach is that it can become messy when several DataSets are involved.
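The two ways of pointing at the data might look roughly like this. It is a sketch under assumptions: nested dicts stand in for the DataSet and DataRow, and the function and column names are made up for illustration.

```python
def make_data_set() -> dict:
    """Hypothetical DataSet stand-in: table name -> {primary key -> row dict}."""
    return {
        "customers": {
            1: {"name": "Ada", "credit_limit": 100.0},
            2: {"name": "Bob", "credit_limit": 50.0},
        }
    }


def raise_limit_by_key(data_set: dict, customer_id: int, amount: float) -> None:
    """Variant 1: pass the data structure plus a key that identifies the row."""
    row = data_set["customers"][customer_id]
    row["credit_limit"] += amount


def raise_limit_on_row(row: dict, amount: float) -> None:
    """Variant 2: the caller fetches the row once and passes it around."""
    row["credit_limit"] += amount
```

With the second variant the method no longer knows which DataSet the row belongs to. If two DataSets are in play, nothing stops a caller from passing a row from the wrong one, which is the mess described above.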

One important lesson we learned was the need for a service layer (Service Layer pattern), which we called the use case layer. This layer contains the services, which we called Controllers. A Controller represents a use case, for example an activity in a workflow process or a simple CRUD window. The responsibility of a Controller is to control and coordinate what happens in a use case:

- Prepares the initial data structure and loads data for combo boxes

- Coordinates the loading or validation of data for additional AJAX calls

- Coordinates the validation of the data structure

- Delegates the persistence of the data structure
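The responsibilities listed above can be sketched as a small Controller. This is an illustrative Python sketch, not the project's code: `EditCustomerController`, `CustomerModule` and `InMemoryCustomerGateway` are hypothetical names, and a dict stands in for the DataSet.

```python
class InMemoryCustomerGateway:
    """Hypothetical data access stand-in; a real one would talk to the database."""

    def __init__(self) -> None:
        self.saved = []

    def load_countries(self) -> list:
        return ["CH", "DE"]

    def save(self, data_set: dict) -> None:
        # Keep a copy of the rows that were handed over for persistence.
        self.saved.append([dict(row) for row in data_set["customers"]])


class CustomerModule:
    """Domain layer: the validation rules live here, not in the Controller."""

    @staticmethod
    def validate(data_set: dict) -> list:
        errors = []
        for row in data_set["customers"]:
            if not row["name"].strip():
                errors.append("name is required")
            if row["country"] not in data_set["countries"]:
                errors.append(f"unknown country: {row['country']}")
        return errors


class EditCustomerController:
    """Use case layer: coordinates one use case and delegates the real work."""

    def __init__(self, gateway: InMemoryCustomerGateway) -> None:
        self._gateway = gateway

    def prepare(self) -> dict:
        # Initial data structure, including the values for a combo box.
        return {"customers": [], "countries": self._gateway.load_countries()}

    def validate(self, data_set: dict) -> list:
        # Could also serve an additional AJAX validation call.
        return CustomerModule.validate(data_set)

    def save(self, data_set: dict) -> list:
        errors = self.validate(data_set)
        if not errors:
            self._gateway.save(data_set)  # persistence is delegated
        return errors
```

The Controller itself contains no business rule and no SQL. It only decides in which order the domain layer and the data access layer are called.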

The report data source, workflow and remoting layers (the remoting layer contains facades that implement the Remote Facade pattern) are purely technical layers. They don’t contain any relevant logic; they just delegate to the use case layer.
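A facade in such a technical layer is deliberately boring. The following Python sketch assumes a hypothetical `LoadCustomerUseCase` in the use case layer and shows a facade that adds nothing of its own:

```python
class LoadCustomerUseCase:
    """Hypothetical use case layer service (a Controller in the terms used here)."""

    def load(self, customer_id: int) -> dict:
        return {"customers": [{"id": customer_id, "name": "Ada"}]}


class CustomerFacade:
    """Remote Facade: a coarse-grained remote entry point without any logic."""

    def __init__(self, use_case: LoadCustomerUseCase) -> None:
        self._use_case = use_case

    def load(self, customer_id: int) -> dict:
        # Pure delegation: no validation, no business rules, no data access.
        return self._use_case.load(customer_id)
```

If a facade method ever grows an `if`, that is a hint that logic is leaking out of the use case layer.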

Finally, the report and web layers represent the user interface. The report layer talks only to the report data source layer and the common layer. The web layer talks only to the remoting layer and the common layer, and asynchronously to the workflow layer. For the web layer we used ASP.NET Web Forms.

Using silos

We used the term silo to define what you have to build to implement a use case. There were two major kinds of use case: a simple CRUD dialog or a workflow activity (which also has a dialog).

The silo for a simple CRUD dialog contains: Code behind, View, Document, Facade Interface, Facade, Use Case Interface and a Controller.

The silo for a workflow activity contains: Code behind, View, Document, Facade Interface, Facade, WFModule, Use Case Interface and a Controller.

The big advantage of a silo is that it is clear what you have to do and where each kind of logic belongs. It is also clear that you shouldn’t reference classes of another silo. This rule helps to minimize side effects.

But there is also a disadvantage: a silo generates boilerplate code (interfaces, WFModule, Facade). This leads to the accidental complexity anti-pattern. To reduce this problem, you could use wizards in your IDE or code generation tools.

Make clear decisions where reuse should happen

The use of silos raises the question of where the reuse of logic happens. This was another important lesson learned: it must be clear where reuse is supposed to happen. In this architecture it happens in the domain layer, in the methods of the BusinessObject and TableModule classes. Those methods are driven by the domain and have specific names, which simplifies reuse.
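As a rough illustration of this rule, here are two Controllers from different silos that never reference each other but share one domain method. The Python sketch below uses hypothetical names (`InvoiceModule`, `ReminderController`, `DashboardController`) and a dict in place of the DataSet.

```python
from datetime import date


class InvoiceModule:
    """Domain layer: the one place where 'overdue' is defined."""

    def __init__(self, data_set: dict) -> None:
        self._rows = data_set["invoices"]

    def overdue_invoices(self, today: date) -> list:
        return [
            row for row in self._rows if not row["paid"] and row["due"] < today
        ]


class ReminderController:
    """Silo A: reuses the domain method, knows nothing about silo B."""

    def invoices_to_remind(self, data_set: dict, today: date) -> list:
        return [row["id"] for row in InvoiceModule(data_set).overdue_invoices(today)]


class DashboardController:
    """Silo B: reuses the same domain method for a different use case."""

    def overdue_count(self, data_set: dict, today: date) -> int:
        return len(InvoiceModule(data_set).overdue_invoices(today))
```

Because the method has a specific, domain-driven name, both Controllers can find and reuse it without copying the rule into their own silo.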

Conclusion

After understanding the architecture, we were quite productive. But I’m still a fan of simple and clean OO architectures, for several reasons: avoiding accidental complexity, encapsulation, information hiding and inheritance. Most of these are hard to achieve with this architecture.

A nice side effect of this architecture is good support for testability. You can set up any state you want because there is no encapsulation. The problem of dependencies between classes still has to be solved with dependency injection and a mocking framework.
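Both points can be seen in a small test. In this Python sketch the function `approve_order` and its status values are hypothetical. The state is built by hand as a plain dict, and the gateway dependency is injected as a mock from the standard library's `unittest.mock`.

```python
from unittest.mock import Mock


def approve_order(data_set: dict, order_id: int, gateway) -> bool:
    """Hypothetical domain logic: only checked orders may be approved."""
    row = data_set["orders"][order_id]
    if row["status"] != "checked":
        return False
    row["status"] = "approved"
    gateway.save(data_set)  # injected dependency, replaced by a mock in tests
    return True


def test_approve_checked_order() -> None:
    # No encapsulation: the exact state needed is written down directly.
    data_set = {"orders": {1: {"status": "checked"}}}
    gateway = Mock()

    assert approve_order(data_set, 1, gateway) is True
    assert data_set["orders"][1]["status"] == "approved"
    gateway.save.assert_called_once_with(data_set)
```

There is no need to drive an object through several method calls to reach the "checked" state. The test simply declares it.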

If your technical environment gives you strong tools for a data centric architecture, you should consider using them. What matters in a data centric architecture is that you define rules for where you place your logic and where reuse happens.
